API Routes

Choose your AI inference route – pay only for what you use (per token):


What's included in an API Blib?

Infrastructure & compliance – fully managed, secure, and regulation-ready from day one.

- No GPU needed – pure API, no hardware management

- No OS to maintain, no security patching – fully managed infrastructure

- Full region control – choose EU, DE or specific country endpoints

- 🇪🇺 EU-hosted, GDPR compliant infrastructure

- ISO/IEC 27001 certified 🇩🇪 data centers

- No prompt or completion logging – stateless RAM-only inference, in-out-forget. Billing metadata retained per tax law

- OpenAI Chat Completions API compatible – drop-in replacement for /v1/chat/completions, use any SDK

- Pay-per-token pricing – no idle costs, no minimum commitments

Smart inference & media – built-in intelligence that handles edge cases so you don't have to.

- High-speed inference – optimized vLLM backends with load balancing

- Free system prompt – up to 1,024 tokens, set via management dashboard

- Guaranteed JSON mode – valid JSON or no charge

- Reasoning + JSON mode – auto 2-call strategy when model can't do both at once

- Thinking rescue – model stuck in reasoning? Auto-detected and recovered

- Auto context compression – auto-summarized when exceeding context window, no hard rejects

- Audio & vision support on multimodal models

- PDF vision support – PDFs auto-converted to page images, zero pre-processing

- Image auto-optimization – metadata stripped, auto-resized, safety-validated

Security & resilience – hardened, self-healing, always on.

- Hardened API surface – dangerous params blocked, injection vectors eliminated

- SSRF-safe image fetching – server-side validation, HTTPS-only, no private IP leaks

- Automatic failover & multi-endpoint redundancy

- Self-healing endpoints – auto-detected failures, health-verified before re-entry

Quick Start

Use any OpenAI-compatible SDK. Just point it to your Trooper.AI route endpoint:

    curl https://router.trooper.ai/v1/chat/completions \
      -H "Authorization: Bearer YOUR_TROOPER_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "clara",
        "messages": [{"role": "user", "content": "Hello!"}],
        "max_tokens": 512
      }'

Why GPU-less LLM Inference Beats Self-Hosting

Running large language models on your own infrastructure means managing GPUs, driver updates, CUDA versions, model weights, scaling, and security patches — all before a single token is generated. With API Blibs you skip every layer of that stack. Our fully managed LLM inference endpoints give you access to state-of-the-art open-source models — Google Gemma 4, Mistral Ministral 3, and NVIDIA Nemotron 3 Nano — without provisioning a single GPU. Requests are processed on optimized vLLM backends with automatic load balancing, delivering consistent low-latency responses even under heavy traffic. No idle GPU costs when you're not calling the API, no ops burden, no surprise bills — just pure inference on demand.

For teams evaluating self-hosted LLM deployments versus managed AI inference, the math is straightforward: API Blibs remove the entire GPU procurement and MLOps layer while giving you the same models, the same quality, and faster time-to-production.

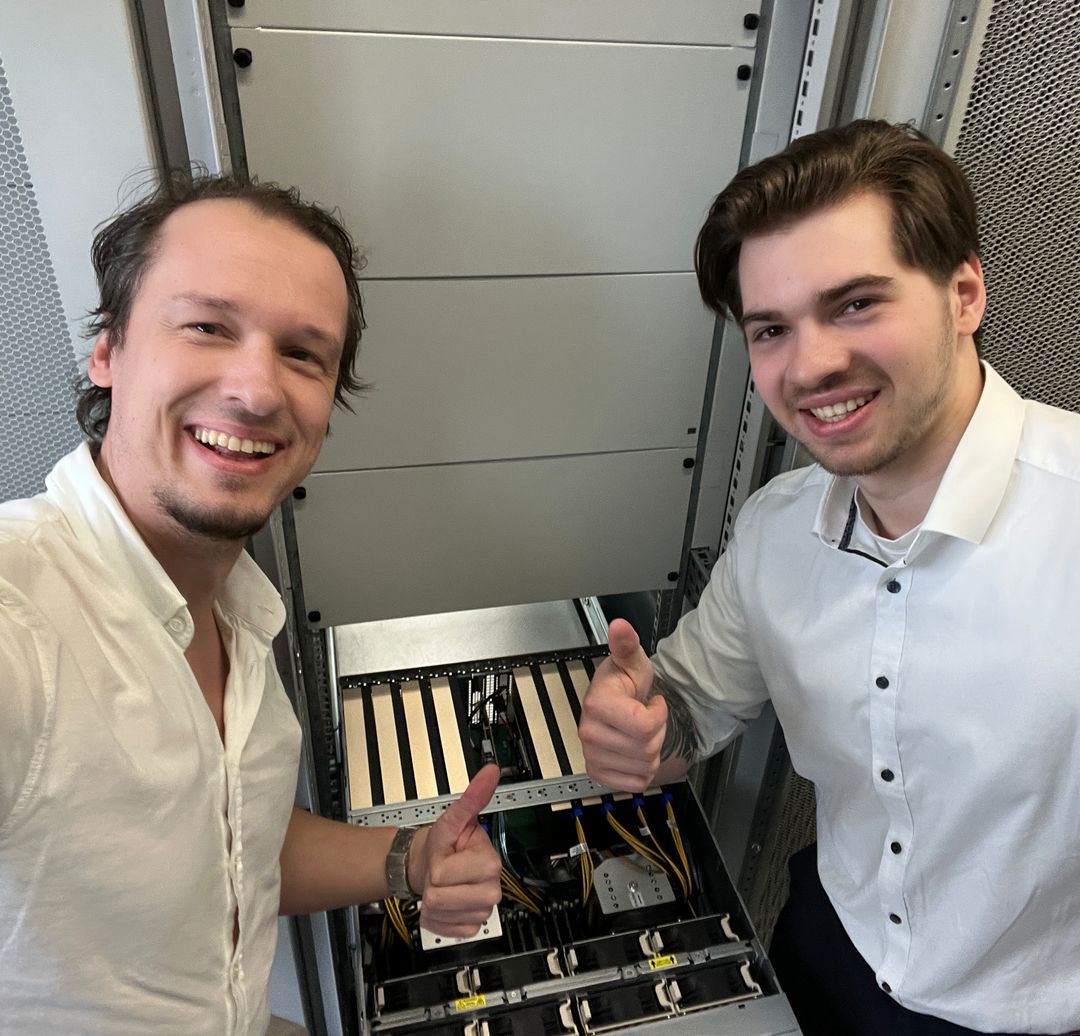

Reliable Hardware, Built by Experts

Behind every API Blib is enterprise-grade, upcycled hardware maintained by our own team. Here, Markus and Jaimie are racking an NVIDIA A100 cluster in one of our ISO/IEC 27001-certified colocation data centers in Germany — the same GPU servers that power your inference requests. We upcycle high-performance components into optimized inference rigs, extending hardware lifecycles while reducing e-waste. We don't resell third-party capacity; we own and operate our own hardware in colocation data centers in Germany and the Netherlands, so we can guarantee performance, security, and data residency at every layer of the stack.

OpenAI Chat Completions API Compatible — Migrate Your AI Stack in Minutes

API Blibs are 100% compatible with the OpenAI Chat Completions API format (/v1/chat/completions). If your application already uses the OpenAI SDK — Python, Node.js, or any HTTP client — switching to Trooper.AI is a one-line change: update the base URL and API key. You get the same endpoint, the same request and response schema, and full support for streaming, JSON mode, function calling, and multimodal inputs. No code rewrite, no new abstractions, no vendor lock-in — your integration stays portable and you stay in control.

Looking for an OpenAI API alternative hosted in Europe? API Blibs give you equivalent Chat Completions API functionality with EU data residency and transparent per-token pricing.

GDPR-Compliant AI Inference Hosted in the EU

Every API Blib route runs exclusively on ISO/IEC 27001-certified colocation data centers in Germany and the European Union. Your prompts and completions are processed in RAM only — fully stateless, no prompt or completion logging, no storage, no model training on your data. Billing metadata is retained as required by tax law. This architecture makes API Blibs a strong choice for regulated industries including healthcare, legal tech, fintech, and public sector, as well as any business where data residency and GDPR compliance are non-negotiable.

Need country-level routing? Choose a specific jurisdiction — Germany, the Netherlands, or broader EU — and your requests will never leave that region. Combined with our hardened API surface and SSRF-safe image fetching, you get an AI inference layer that meets enterprise security requirements out of the box.

Predictable Per-Token Pricing — All Costs Shown Upfront

With API Blibs you pay only for the tokens you consume — input and output, billed per million tokens. No setup fees, no monthly minimums, no charges for idle time. Prepay credits at your own pace and your budget is drawn down only when you make actual API calls. On top of that, every monthly campaign adds bonus credits to your top-up — the exact percentage depends on the current promotion. This makes it simple to forecast costs whether you're running a customer-facing chatbot, a document extraction pipeline, or large-scale batch classification.

Compare that to GPU rental where you pay by the hour regardless of utilization, or proprietary API providers with complex pricing tiers. API Blibs give you transparent, token-based billing from the first token to the last.
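As a rough illustration of per-million-token billing, the sketch below estimates the cost of a workload from its input and output token counts. The function name and both prices are placeholders chosen for the example, not actual Trooper.AI rates; check the route listing for current prices.

```python
from decimal import Decimal

TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost_eur(input_tokens: int, output_tokens: int,
                      input_price_per_m: str, output_price_per_m: str) -> Decimal:
    """Estimate the cost in EUR of one workload.

    Prices are per million tokens and passed as strings (e.g. "0.10")
    so that Decimal represents them exactly.
    """
    input_cost = Decimal(input_tokens) / TOKENS_PER_MILLION * Decimal(input_price_per_m)
    output_cost = Decimal(output_tokens) / TOKENS_PER_MILLION * Decimal(output_price_per_m)
    return input_cost + output_cost


# HYPOTHETICAL prices: 0.10 EUR per million input tokens,
# 0.40 EUR per million output tokens.
monthly = estimate_cost_eur(50_000_000, 10_000_000, "0.10", "0.40")
print(f"Estimated monthly cost: {monthly:.2f} EUR")
```

Because there are no minimums or idle charges, a month with zero API calls costs zero in this model.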

API Blibs vs. the Competition

Choosing a managed LLM inference provider in Europe means balancing price, data residency, and operational simplicity. Here is how API Blibs compare to major cloud-based alternatives.

| Criterion | Trooper.AI API Blibs | Competition (typical) |
|---|---|---|
| EU data residency | Yes – default; every request processed in 🇪🇺 EU / 🇩🇪 DE | Varies – EU regions may be available, but can be limited to certain plans, require eligibility approval, or route cross-region |
| Data retention | No prompt/completion logging – stateless RAM-only inference; billing metadata retained per tax law | Configurable – some providers retain data by default for abuse monitoring or logging; opt-out may be required |
| Country-level routing | Yes – choose DE, NL or broader EU | Varies – regional deployment may be available, but not all models in every region; country-level control often unavailable on standard plans |
| Pricing model | Per-token in €, no minimums, prepaid credits + promo credits on top | Typically per-token in $; some providers use complex pricing tiers, provisioned throughput units, or priority premiums |
| Additional costs | Transparent – token-based billing, no infrastructure or setup fees | Additional charges common for add-on services, fine-tuned model hosting, platform tooling, and infrastructure overhead |
| API compatibility | Yes – 100% OpenAI Chat Completions API compatible, one-line migration | Varies – some offer OpenAI-compatible endpoints, others use proprietary APIs that require code changes |
| Setup complexity | Low – API key + base URL, done | Can be high – may require cloud subscriptions, resource groups, IAM configuration, and manual model access requests |
| Vendor lock-in | Low – OpenAI Chat Completions API compatible, switch anytime | Low to high – ranges from portable standard APIs to deep ecosystem lock-in with proprietary tooling |
| Built-in features | Auto context compression, PDF vision, thinking rescue, guaranteed JSON, SSRF-safe image fetch | Feature sets vary; typically batch APIs, prompt caching, guardrails, and RAG tooling as paid add-ons |
| Certifications | ISO/IEC 27001 🇩🇪 colocation data centers | Major providers typically hold SOC 2, ISO 27001, and regional certifications |
| Best for | EU-first teams that want zero-config, GDPR-compliant inference at transparent prices | Teams already embedded in a specific cloud ecosystem or needing a broader API surface beyond chat completions |

As of April 2026. "Competition" reflects common patterns across major managed LLM inference providers. Individual offerings vary. No guarantee of accuracy or completeness.

Bottom line: Major cloud providers offer EU data residency — but it may come with eligibility requirements, additional costs, or ecosystem lock-in. API Blibs give you EU-hosted, GDPR-compliant inference out of the box, with minimal setup friction and transparent token-based billing.

Supported Models — Open-Source LLMs Optimized for Production

API Blibs give you access to carefully selected open-source models, optimized for production workloads on our vLLM inference backends. Each model is chosen for its price-to-performance ratio, EU language coverage, and license clarity.

liv — Google Gemma 4

The most affordable route — a compact multimodal model that handles text, images, audio, and reasoning in a single call. Ideal for high-volume workloads where cost per token matters most, from classification and summarization to image captioning and audio transcription.

clara — Mistral Ministral 3

A fast vision-first model built for throughput. Strong EU language performance, multi-image analysis, and structured extraction at a mid-range price point — perfect for document processing, OCR pipelines, and customer-facing chatbots that need to see.

nikola — NVIDIA Nemotron 3 Nano

The reasoning powerhouse. A mixture-of-experts architecture that delivers deep reasoning and strong coding ability at efficient inference cost. Best suited for code generation, complex reasoning chains, function calling, and agentic workflows.

All models are served via OpenAI-compatible endpoints. Switch between routes by changing the model parameter — no code changes required.
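To make the route switch concrete, here is a minimal sketch that builds the same Chat Completions request body for each route. The helper name `build_chat_request` is made up for this example; only the route names and the request fields come from this page.

```python
def build_chat_request(route: str, prompt: str, max_tokens: int = 512) -> dict:
    """Build a Chat Completions request body for the given route name."""
    return {
        "model": route,  # the only field that changes between routes
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


prompt = "Summarize the meeting notes in three bullet points."
requests_by_route = {
    route: build_chat_request(route, prompt)
    for route in ("liv", "clara", "nikola")
}

for route, body in requests_by_route.items():
    print(route, "->", body["model"])
```

Everything except the `model` value is identical across the three bodies, so a route can be selected from configuration rather than code.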

LLM API Use Cases for European Businesses

Document Extraction & RAG Pipelines

Feed PDFs, images, and scanned documents into vision-enabled routes like clara or liv. API Blibs auto-convert PDFs to page images and normalize image inputs — your RAG pipeline receives clean, structured data without pre-processing steps. Combined with guaranteed JSON mode, you get reliable structured output for downstream indexing.

Customer-Facing Chatbots & Virtual Assistants

Deploy AI-powered chat with sub-second latency and full GDPR compliance. Set a free system prompt via the management dashboard, use function calling for backend integration, and let auto context compression handle long conversations without hitting context limits. Zero data retention means your customers' conversations are never stored.

Code Generation & Developer Tools

Route complex coding tasks to nikola for deep reasoning and accurate function calling. The OpenAI-compatible API integrates directly with developer toolchains — VS Code extensions, CI/CD pipelines, code review bots — with a single base URL change.

Multimodal Workflows — Vision, Audio & PDF

Process images, audio files, and PDFs in a single API call. liv handles all three modalities; clara specializes in high-resolution vision tasks. Images are auto-optimized (metadata stripped, resized, SSRF-validated), and PDFs are converted to page images server-side. No client-side pre-processing needed.
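The following sketch shows one way to assemble a multimodal user message in the OpenAI content-part format used by the cURL vision example on this page. The helper names are invented for illustration. Passing local files as base64 `data:` URLs is the usual OpenAI convention, but whether a given route accepts them is an assumption here; the HTTPS URL form is the one shown on this page.

```python
import base64


def image_part_from_url(url: str) -> dict:
    """Content part that references an image by URL."""
    return {"type": "image_url", "image_url": {"url": url}}


def image_part_from_bytes(data: bytes, mime_type: str = "image/png") -> dict:
    """Content part that embeds raw image bytes as a base64 data URL.

    ASSUMPTION: the route accepts data URLs, as OpenAI-compatible
    endpoints commonly do.
    """
    encoded = base64.b64encode(data).decode("ascii")
    return image_part_from_url(f"data:{mime_type};base64,{encoded}")


def build_vision_message(image_parts: list, question: str) -> dict:
    """Combine image parts and a text question into one user message."""
    return {
        "role": "user",
        "content": [*image_parts, {"type": "text", "text": question}],
    }


message = build_vision_message(
    [image_part_from_url("https://example.com/invoice.png")],
    "Extract all line items from this invoice as JSON.",
)
print(len(message["content"]), "content parts")
```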

Batch Classification & Data Enrichment

Run high-volume classification, tagging, sentiment analysis, or entity extraction at scale. Per-token pricing with no idle costs means you pay only when processing. Combine with guaranteed JSON mode for machine-readable output that feeds directly into your data pipeline.

Frequently Asked Questions About API Blibs

Is my data stored or used for training?

No. API Blibs use a fully stateless, RAM-only architecture. Your prompts and completions are processed in memory and discarded immediately after the response is returned. No prompt or completion logging, no storage, no model training on your data. Billing metadata (token counts, transaction IDs) is retained as required by tax law.

Can I use function calling and tool use?

Yes. All API Blib routes support OpenAI-compatible function calling. Define your tools in the standard tools parameter and the model returns structured tool calls in the response.
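A minimal sketch of the round trip, assuming the standard OpenAI `tools` and `tool_calls` shapes: the `get_weather` tool, the helper names, and the sample response are all made up for illustration and are not output from a real call.

```python
import json


def get_weather_tool() -> dict:
    """HYPOTHETICAL tool definition in the OpenAI `tools` format."""
    return {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Look up the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }


def extract_tool_calls(response: dict) -> list:
    """Return (function name, parsed arguments) for each tool call."""
    message = response["choices"][0]["message"]
    calls = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        # Arguments arrive as a JSON-encoded string and must be parsed.
        calls.append((fn["name"], json.loads(fn["arguments"])))
    return calls


request_body = {
    "model": "nikola",
    "messages": [{"role": "user", "content": "What is the weather in Berlin?"}],
    "tools": [get_weather_tool()],
}

# Hand-written sample in the OpenAI response shape, for illustration only.
sample_response = {
    "choices": [{
        "message": {
            "role": "assistant",
            "content": None,
            "tool_calls": [{
                "id": "call_1",
                "type": "function",
                "function": {"name": "get_weather", "arguments": '{"city": "Berlin"}'},
            }],
        }
    }]
}

print(extract_tool_calls(sample_response))
```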

What happens if my input exceeds the context window?

Instead of rejecting your request, API Blibs automatically compress the middle of the conversation to fit within the model's context window. You get a complete response without losing the beginning or end of your conversation thread.
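To illustrate the idea of keeping the start and end of a thread while shrinking the middle, here is a simplified client-side sketch. It is not the service's actual algorithm, which is not documented on this page; the function name, the `keep_head`/`keep_tail` parameters, and the placeholder summary text are all assumptions.

```python
def compress_middle(messages: list, keep_head: int = 2, keep_tail: int = 4,
                    summarize=None) -> list:
    """Keep the first and last messages and replace the middle with one summary.

    `summarize` is an optional callable that turns the dropped messages
    into a summary string; without it a placeholder is used.
    """
    if len(messages) <= keep_head + keep_tail:
        return list(messages)  # nothing to compress

    split = len(messages) - keep_tail
    head, middle, tail = messages[:keep_head], messages[keep_head:split], messages[split:]

    if summarize is not None:
        summary_text = summarize(middle)
    else:
        summary_text = f"[{len(middle)} earlier messages summarized]"

    return [*head, {"role": "system", "content": summary_text}, *tail]


conversation = [{"role": "user", "content": f"message {i}"} for i in range(10)]
compressed = compress_middle(conversation, keep_head=2, keep_tail=3)
print(len(conversation), "->", len(compressed), "messages")
```

The opening messages (often the task definition) and the most recent turns survive unchanged, which matches the behavior described above.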

Do you support streaming?

Yes. Standard SSE streaming via the stream: true parameter, fully compatible with the OpenAI SDK streaming interface.
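If you are not using an SDK, the stream can be consumed by hand. The sketch below parses lines in the OpenAI streaming format (`data: {...}` chunks ending with `data: [DONE]`) and yields the text deltas. The function name and the sample lines are illustrative, not captured from a real response.

```python
import json
from typing import Iterable, Iterator


def iter_content_deltas(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style SSE lines."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and SSE comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


# Hand-written sample stream for illustration.
sample_stream = [
    'data: {"choices": [{"index": 0, "delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"index": 0, "delta": {"content": "Hel"}}]}',
    "",
    'data: {"choices": [{"index": 0, "delta": {"content": "lo!"}}]}',
    "",
    "data: [DONE]",
]

print("".join(iter_content_deltas(sample_stream)))
```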

How do I switch from OpenAI to Trooper.AI?

One-line change. Update your base_url to https://router.trooper.ai/v1 and replace your API key. The request format, response schema, and streaming behavior are identical.
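For projects that use a plain HTTP client rather than an SDK, the same switch amounts to changing the URL and the bearer token. This sketch builds the request with Python's standard library only and leaves the actual send commented out; the helper name `build_request` is an assumption for the example.

```python
import json
import urllib.request

BASE_URL = "https://router.trooper.ai/v1"


def build_request(api_key: str, body: dict) -> urllib.request.Request:
    """Build a POST request for the Chat Completions endpoint."""
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_request(
    "YOUR_TROOPER_KEY",
    {
        "model": "clara",
        "messages": [{"role": "user", "content": "Hello!"}],
        "max_tokens": 512,
    },
)
print(req.get_method(), req.full_url)

# To send the request (requires a valid key and network access):
# with urllib.request.urlopen(req, timeout=60) as resp:
#     reply = json.load(resp)
#     print(reply["choices"][0]["message"]["content"])
```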

Which EU regions are available?

You can route requests to Germany (DE), the Netherlands (NL), or broader EU endpoints. Select your preferred region in the management dashboard or via the API.

What if the model gets stuck in a reasoning loop?

API Blibs include thinking rescue — we detect when a model enters a reasoning loop and auto-recover, ensuring you always receive a usable response instead of a timeout or empty reply.

Is guaranteed JSON mode really guaranteed?

Yes. When you request JSON output, we validate the response structure. If the model fails to produce valid JSON, you are not charged for that request.
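On the client side, a defensive parse is still cheap to add. The sketch below requests JSON mode via `response_format` (as in the cURL vision example on this page) and parses the reply, returning `None` if the content is not valid JSON. The helper names and the sample response are illustrative assumptions.

```python
import json
from typing import Optional


def build_json_request(route: str, instruction: str) -> dict:
    """Build a request body that asks for a JSON object in the reply."""
    return {
        "model": route,
        # Mentioning JSON in the prompt is the usual practice with JSON mode.
        "messages": [{"role": "user", "content": f"{instruction} Respond in JSON."}],
        "response_format": {"type": "json_object"},
    }


def parse_json_reply(response: dict) -> Optional[dict]:
    """Return the parsed JSON content, or None if it is missing or invalid."""
    content = response["choices"][0]["message"].get("content")
    try:
        return json.loads(content)
    except (TypeError, json.JSONDecodeError):
        return None


body = build_json_request("liv", "Classify the sentiment of: 'Great service!'")

# Hand-written sample response for illustration.
sample_response = {
    "choices": [{
        "message": {
            "role": "assistant",
            "content": '{"sentiment": "positive", "confidence": 0.93}',
        }
    }]
}

print(parse_json_reply(sample_response))
```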

Do I need to pre-process images or PDFs before sending them?

No. Images are automatically normalized (metadata stripped, resized to the model's maximum resolution, validated for security). PDFs are converted to page images server-side. You send raw files; we handle the rest.

What certifications do your data centers have?

All infrastructure runs in ISO/IEC 27001-certified colocation data centers in Germany and the EU. Combined with GDPR compliance, no prompt or completion logging, and a hardened API surface, API Blibs meet enterprise security requirements out of the box.

Integration Guides — Connect Your Stack to API Blibs

Python (OpenAI SDK)

    from openai import OpenAI

    client = OpenAI(
        base_url="https://router.trooper.ai/v1",
        api_key="YOUR_TROOPER_KEY"
    )

    response = client.chat.completions.create(
        model="clara",
        messages=[{"role": "user", "content": "Summarize this document."}],
        max_tokens=1024
    )

    print(response.choices[0].message.content)

Node.js (OpenAI SDK)

    import OpenAI from "openai";

    const client = new OpenAI({
      baseURL: "https://router.trooper.ai/v1",
      apiKey: "YOUR_TROOPER_KEY",
    });

    const response = await client.chat.completions.create({
      model: "nikola",
      messages: [{ role: "user", content: "Write a unit test for this function." }],
      max_tokens: 2048,
    });

    console.log(response.choices[0].message.content);

LangChain (Python)

    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(
        base_url="https://router.trooper.ai/v1",
        api_key="YOUR_TROOPER_KEY",
        model="clara",
        max_tokens=1024
    )

    response = llm.invoke("Extract all dates from the following text: ...")

    print(response.content)

LlamaIndex

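    from llama_index.llms.openai_like import OpenAILike

    llm = OpenAILike(
        api_base="https://router.trooper.ai/v1",
        api_key="YOUR_TROOPER_KEY",
        model="nikola",
        max_tokens=2048,
        # Route calls through /v1/chat/completions instead of the
        # legacy completions endpoint (OpenAILike defaults to the latter).
        is_chat_model=True
    )

    response = llm.complete("Explain the EU AI Act in simple terms.")

    print(response.text)

cURL with Vision (Image Input)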

    curl https://router.trooper.ai/v1/chat/completions \
      -H "Authorization: Bearer YOUR_TROOPER_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "clara",
        "messages": [{
          "role": "user",
          "content": [
            {"type": "image_url", "image_url": {"url": "https://example.com/invoice.png"}},
            {"type": "text", "text": "Extract all line items from this invoice as JSON."}
          ]
        }],
        "max_tokens": 2048,
        "response_format": {"type": "json_object"}
      }'

AI Compliance for German & EU Companies

The EU AI Act — What It Means for Your AI Infrastructure

The EU AI Act (Regulation (EU) 2024/1689) becomes broadly applicable on 2 August 2026, introducing the world's first comprehensive legal framework for artificial intelligence. For companies operating in Germany and the EU, this means new obligations around transparency, documentation, and risk management, with penalties of up to €35 million or 7% of global annual turnover for the most serious violations.

While the Act primarily targets providers and deployers of high-risk AI systems (such as AI used in recruitment, credit scoring, or critical infrastructure), every company using AI should understand where their systems sit on the risk pyramid — and ensure their inference infrastructure supports compliance.

Why Your Inference Provider Matters

Even for minimal- and limited-risk AI use cases, the EU AI Act emphasizes transparency and data governance. Choosing an inference provider that operates within the EU, retains no data, and provides clear documentation simplifies your compliance posture:

- Data residency: Keeping processing inside the EU avoids third-country transfers and keeps your data-flow documentation simple. API Blibs run exclusively on ISO/IEC 27001-certified data centers in Germany and the EU – no data leaves the region.

- No prompt or completion logging: API Blibs use stateless, RAM-only inference. Prompts and completions are never stored, eliminating concerns around data logging, retention periods, and subject access requests under GDPR. Billing metadata is retained as required by tax law.

- Transparency: Clear per-token pricing, documented model specifications, and a hardened API surface make it straightforward to document your AI supply chain, a key requirement for Auftragsverarbeitung (commissioned data processing) under GDPR and for the upcoming AI Act transparency obligations.

- No model training on your data: Your inputs are never used to train or fine-tune models. Full data separation by design.

GDPR + AI Act: Dual Compliance

German companies face a dual compliance burden: GDPR (in effect since 2018) and the AI Act (phased enforcement through 2027). Both frameworks require you to demonstrate that personal data is processed lawfully, transparently, and with appropriate safeguards. Using a US-based inference provider without EU data residency creates unnecessary regulatory surface area — you must rely on Standard Contractual Clauses, assess adequacy decisions, and document cross-border data flows.

API Blibs eliminate this complexity: all processing happens within the EU, with no prompt or completion logging and ISO-certified colocation infrastructure. Your Datenschutzbeauftragter (data protection officer) can document a clean, EU-only data flow with no third-country transfers.

BaFin, Healthcare & Regulated Industries

For companies in regulated sectors — fintech (BaFin-regulated), healthtech, legal tech, public sector — the bar is even higher. Auditors expect:

- Demonstrable data residency within the EU or specific member states

- No data leakage to third-party systems or training pipelines

- Clear documentation of the AI supply chain and subprocessors

- Incident response and failover procedures

API Blibs address all four: country-level routing (DE, NL), no prompt or completion logging (billing metadata retained per tax law), published model specifications, and automatic failover with self-healing endpoints.

Getting Started with Compliant AI Inference

You don't need a lengthy procurement cycle to deploy GDPR- and AI Act-ready LLM inference. Create a Trooper.AI account, top up prepaid credits, and start making API calls — all infrastructure is already certified, all data stays in the EU, and there is nothing to configure on the compliance side.

For Auftragsverarbeitungsvertrag (AVV / DPA) requests or questions about your specific compliance requirements, contact us at [email protected] or call +49 6126 9289991.


PAYMENT – GOOD TO KNOW:

You are billed per token used, charged against your prepaid budget.

No idle costs – you only pay when you make API calls.

Official invoice issued the next day. VAT is already included where applicable.

NO REFUNDS! Read the full payment docs.